If you work in healthcare, financial services, payments, or government contracting, you already know that code review is not optional. Regulatory frameworks require it. Auditors check for it. The question is not whether to do code review, but what level of review actually satisfies your compliance obligations – and whether the review you are doing for compliance is also making your code better.

Too often, the answer is no. Compliance-driven code review becomes a checkbox exercise: every PR gets a rubber-stamp approval, the audit trail shows that reviews happened, and nobody actually looked closely at the code. The organisation passes the audit while the codebase accumulates the same defects it would have accumulated without review.

It does not have to be this way. Understanding what each framework actually requires – rather than what teams assume it requires – is the first step towards review processes that satisfy auditors and improve software quality at the same time.

What the frameworks actually require

HIPAA (Health Insurance Portability and Accountability Act) does not prescribe specific code review practices. What the Security Rule requires is that covered entities and their business associates implement policies and procedures to prevent, detect, contain, and correct security violations. Its technical safeguards include access control, audit controls, integrity controls, and transmission security. Code review is not explicitly mentioned, but showing that your development process includes review of code that handles protected health information (PHI) is strong supporting evidence that those safeguards are implemented correctly. An auditor will look for evidence that security-sensitive code changes are reviewed before deployment.

SOC 2 (System and Organization Controls 2) evaluates controls against five trust services criteria: security, availability, processing integrity, confidentiality, and privacy. Under the common criteria for change management (CC8.1), organisations must demonstrate that changes to infrastructure, data, software, and procedures are authorised, designed, developed or acquired, configured, documented, tested, approved, and implemented. Code review fits into the “approved” stage. Auditors typically expect to see that code changes go through a review process with documented approval before being merged to production branches. The specific mechanism – pull request approvals, formal code review sessions, or automated checks – is flexible, but the evidence must be traceable.

PCI-DSS (Payment Card Industry Data Security Standard) is more prescriptive. Requirement 6 deals with developing and maintaining secure systems and software. It requires custom code to be reviewed for potential vulnerabilities before it is released to production. Where that review is performed manually, it must be carried out by individuals other than the originating code author who are knowledgeable in code review techniques and secure coding practices. This makes PCI-DSS one of the few frameworks that explicitly names code review as a control.

GDPR (General Data Protection Regulation) does not mention code review directly. Article 25 requires data protection by design and by default, and Article 32 requires appropriate technical and organisational measures to ensure a level of security appropriate to the risk. Code review that evaluates how personal data is handled, stored, and transmitted is a practical demonstration of these obligations. Organisations that process personal data should be able to show auditors that code changes affecting data processing are reviewed for compliance with data protection principles.

FedRAMP (Federal Risk and Authorization Management Program) requires that cloud service providers implement NIST SP 800-53 security controls. The relevant controls include CM-3 (configuration change control), which requires documentation and approval of changes, and SA-11 (developer testing and evaluation), which requires developers to create and implement a security assessment plan and whose enhancements cover static code analysis and manual code review. FedRAMP is among the most demanding frameworks, and evidence of systematic, documented code review is a baseline expectation.

The compliance checkbox problem

The common thread across these frameworks is that they require evidence of review, not a specific quality of review. This creates a perverse incentive. The easiest way to satisfy an auditor is to ensure that every pull request has at least one approval from someone other than the author. Whether that approval reflects ten seconds of glancing at the diff or two hours of careful analysis, the audit trail looks the same.

This is the compliance checkbox problem. The audit passes. The control is documented. The review happened in name. But the codebase is no better for it. The security vulnerabilities, architectural inconsistencies, and data handling errors that a thorough review would catch remain in the code, creating the risks the framework was designed to prevent.

Checkbox review is not just wasteful. It is actively harmful because it creates a false sense of security. The team believes their code is reviewed. Management reports that the review process is in place. The auditor confirms that the control is operating effectively. And the SQL injection vulnerability in the payment processing module goes undetected because the reviewer approved the PR in thirty seconds.

Bridging compliance and quality

The solution is not to abandon compliance-driven review. It is to make the review that satisfies compliance also be the review that catches real problems. Several practices help.

Review checklists tied to regulatory requirements. Rather than reviewing code against generic quality standards, create checklists specific to your regulatory obligations. For HIPAA-covered code, the checklist should include: does this change handle PHI? If so, is it encrypted in transit and at rest? Are access controls enforced? Is there an audit trail? For PCI-DSS, the checklist should reference the specific secure coding requirements in the standard. A checklist transforms review from a vague quality exercise into a structured compliance verification.
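A checklist like the HIPAA one above can live as structured data rather than a wiki page, so that an unanswered question is visible to tooling. The following is a minimal sketch, not a prescribed format: the class names, the `hipaa_checklist` helper, and the exact question wording are illustrative assumptions, and the checklist is assumed to apply only to changes already identified as handling PHI.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ChecklistItem:
    question: str
    answer: Optional[bool] = None  # None means the reviewer has not answered yet
    note: str = ""


@dataclass
class ReviewChecklist:
    framework: str
    items: List[ChecklistItem] = field(default_factory=list)

    def unanswered(self) -> List[str]:
        """Questions the reviewer has not addressed."""
        return [item.question for item in self.items if item.answer is None]

    def failed(self) -> List[str]:
        """Questions the reviewer answered 'no' to."""
        return [item.question for item in self.items if item.answer is False]

    def is_complete(self) -> bool:
        """True once every question has an explicit yes or no."""
        return not self.unanswered()


def hipaa_checklist() -> ReviewChecklist:
    # Hypothetical helper: questions paraphrase the article's HIPAA example
    # and are meant for changes that handle PHI.
    return ReviewChecklist(
        framework="HIPAA",
        items=[
            ChecklistItem("Is PHI encrypted in transit and at rest?"),
            ChecklistItem("Are access controls enforced?"),
            ChecklistItem("Is there an audit trail for access to PHI?"),
        ],
    )
```

Keeping "unanswered" separate from "failed" is deliberate: a skipped question is a process gap, while a "no" is a finding that needs a disposition.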

Categorise code by regulatory exposure. Not all code in a regulated environment requires the same level of review. A change to the marketing website's CSS does not have the same compliance implications as a change to the authentication module or the payment processing pipeline. Categorising code modules by their regulatory exposure allows you to apply stricter review requirements where they matter and lighter requirements where they do not.
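One way to make this categorisation explicit is a small mapping from file paths to exposure tiers. The sketch below is illustrative only: the directory names, the three tier labels, and the choice to treat unclassified paths as high exposure are all assumptions to adapt to your own repository layout.

```python
from fnmatch import fnmatchcase
from typing import Iterable

# Hypothetical path patterns, checked from most to least sensitive.
EXPOSURE_RULES = [
    ("high", ["src/auth/*", "src/payments/*"]),
    ("medium", ["src/api/*"]),
    ("low", ["web/marketing/*", "*.css"]),
]
TIER_ORDER = {"low": 0, "medium": 1, "high": 2}

# Assumption: anything nobody has classified gets the strictest treatment.
DEFAULT_TIER = "high"


def tier_for_path(path: str) -> str:
    """Return the exposure tier of the first rule whose pattern matches."""
    for tier, patterns in EXPOSURE_RULES:
        if any(fnmatchcase(path, pattern) for pattern in patterns):
            return tier
    return DEFAULT_TIER


def tier_for_change(paths: Iterable[str]) -> str:
    """A change takes the highest tier among the files it touches."""
    return max(
        (tier_for_path(p) for p in paths),
        key=TIER_ORDER.__getitem__,
        default="low",  # an empty change touches nothing regulated
    )
```

For example, `tier_for_change(["web/marketing/site.css", "src/auth/login.py"])` evaluates to `"high"`, because a single sensitive file is enough to raise the whole change.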

Require review depth proportional to risk. For code that handles regulated data, require not just a PR approval but documented evidence of what the reviewer checked. This could be a completed checklist, comments explaining the review findings, or a structured review report. The additional documentation serves dual purposes: it satisfies auditors who want to see that reviews are substantive, and it creates accountability that discourages rubber-stamping.
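Proportional review depth can be enforced as a pre-merge check that compares the evidence attached to a change against what its risk tier demands. This is a rough sketch under stated assumptions: the tier names, the `REQUIREMENTS` policy values, and the `ReviewEvidence` fields are hypothetical, and a real gate would read them from your code hosting platform.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical policy: what each risk tier requires before merge.
REQUIREMENTS = {
    "low": {"approvals": 1, "checklist": False, "notes": False},
    "medium": {"approvals": 1, "checklist": True, "notes": False},
    "high": {"approvals": 2, "checklist": True, "notes": True},
}


@dataclass
class ReviewEvidence:
    author: str
    approvers: List[str] = field(default_factory=list)
    checklist_complete: bool = False
    reviewer_notes: str = ""


def missing_evidence(tier: str, evidence: ReviewEvidence) -> List[str]:
    """List what is still missing before a change in this tier may merge."""
    policy = REQUIREMENTS[tier]
    problems = []

    # Self-approval never counts towards the required number of approvals.
    independent = set(evidence.approvers) - {evidence.author}
    if len(independent) < policy["approvals"]:
        problems.append(f"{policy['approvals']} independent approval(s)")

    if policy["checklist"] and not evidence.checklist_complete:
        problems.append("completed checklist")

    if policy["notes"] and not evidence.reviewer_notes.strip():
        problems.append("written review findings")

    return problems
```

An empty list means the change may merge. Anything else tells the reviewer what the audit trail still lacks.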

Use automated analysis as a baseline. Linting, static analysis, and AI-powered code review can catch entire categories of issues before a human reviewer looks at the code. This is not a replacement for human review – PCI-DSS, for example, specifies who may act as a reviewer and what they must know – but it ensures that the human reviewer is not wasting time on issues that a tool could have caught. The human reviewer can focus on the questions that require judgement: is this the right approach? Does this handle edge cases? Is this consistent with our data handling policies?

Exportable reports as compliance evidence

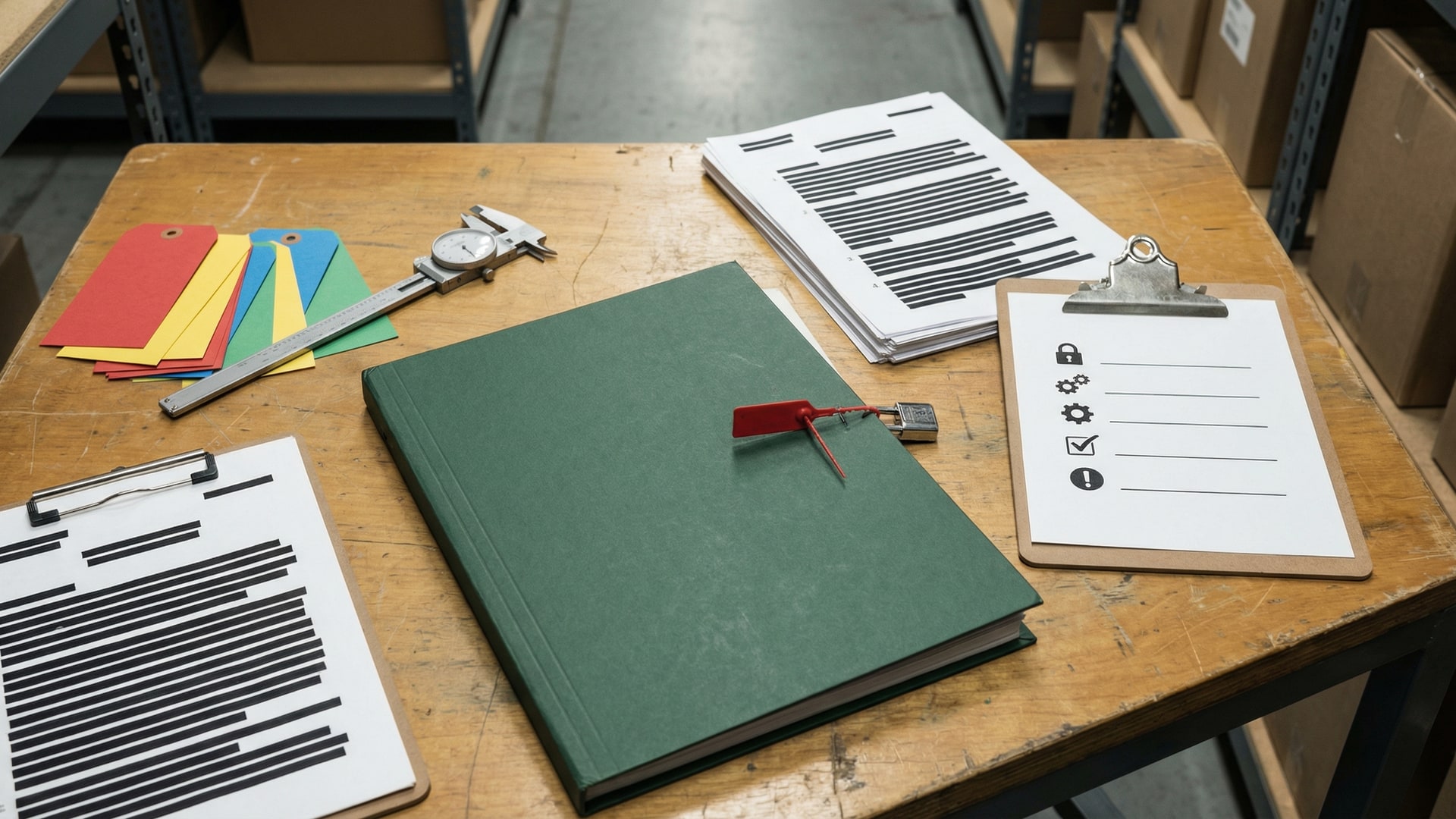

Auditors do not want to log into your GitHub account and read pull request comments. They want a document they can include in their workpapers. The more structured and accessible your review evidence is, the smoother the audit process.

Effective compliance evidence for code review includes the date of the review, the reviewer's identity, the scope of the code reviewed, the findings identified, and the disposition of each finding (fixed, accepted, or deferred with a documented reason). Pull request comments can provide some of this information, but they are scattered across dozens of PRs and require effort to aggregate.

A consolidated review report that covers the entire codebase or a specific module is far more useful as compliance evidence. It provides a snapshot of the state of the code at a point in time, including what was reviewed, what was found, and what was done about it.
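The evidence fields listed above map naturally onto a small record type, and a consolidated report is then an aggregation over those records. The sketch below is one possible shape, not a standard schema: the `Finding` class, its field names, and the rule that only deferred findings need a reason are assumptions for illustration.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass
from datetime import date
from typing import List

DISPOSITIONS = {"fixed", "accepted", "deferred"}


@dataclass
class Finding:
    review_date: date
    reviewer: str
    scope: str          # module or path that was reviewed
    description: str
    disposition: str    # one of DISPOSITIONS
    reason: str = ""

    def __post_init__(self) -> None:
        if self.disposition not in DISPOSITIONS:
            raise ValueError(f"unknown disposition: {self.disposition!r}")
        # Assumption: a deferral is only acceptable with a documented reason.
        if self.disposition == "deferred" and not self.reason.strip():
            raise ValueError("deferred findings need a documented reason")


def consolidated_report(findings: List[Finding]) -> dict:
    """Aggregate individual findings into one JSON-serialisable report."""
    return {
        "total_findings": len(findings),
        "by_disposition": dict(Counter(f.disposition for f in findings)),
        "findings": [
            {**asdict(f), "review_date": f.review_date.isoformat()}
            for f in findings
        ],
    }


if __name__ == "__main__":
    sample = [
        Finding(date(2024, 1, 15), "bob", "src/payments",
                "Unparameterised SQL query", "fixed"),
    ]
    print(json.dumps(consolidated_report(sample), indent=2))
```

Validating the disposition at construction time means an incomplete record cannot reach the report, which is the property an auditor cares about.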

VibeRails generates exportable reports in formats suitable for compliance documentation. Each report includes the scope of the review, the findings categorised by severity and type, and the status of each finding. The reports can be exported as HTML and included as evidence in audit packages. For teams in regulated industries, the report serves double duty: it is the engineering team's improvement plan and the compliance team's audit evidence, generated from the same review process.

Making compliance review worth the effort

The fundamental insight is that compliance review and quality review are not separate activities that happen to overlap. They are the same activity, done at different levels of rigour. A review process that only satisfies the auditor is a waste of engineering time. A review process that genuinely improves code quality will almost always satisfy the auditor as a side effect.

Start by understanding what your specific framework actually requires. Map those requirements to concrete review practices. Automate the mechanical parts. Focus human review time on the questions that require judgement and domain knowledge. Document the results in a format that serves both engineering and compliance needs.

The goal is not to do two kinds of review – one for the auditor and one for the code. It is to do one kind of review, done thoroughly, that serves both purposes. That is not just more efficient. It is the only approach that actually reduces the risk the regulatory framework was designed to address.

Limits and tradeoffs

- AI-assisted review can miss context. Treat its findings as prompts for investigation, not verdicts.

- False positives happen. Plan a quick triage pass before you schedule work.

- Privacy depends on your model setup. If you use a cloud model, relevant code is sent to that provider; local models can keep inference on your own hardware.