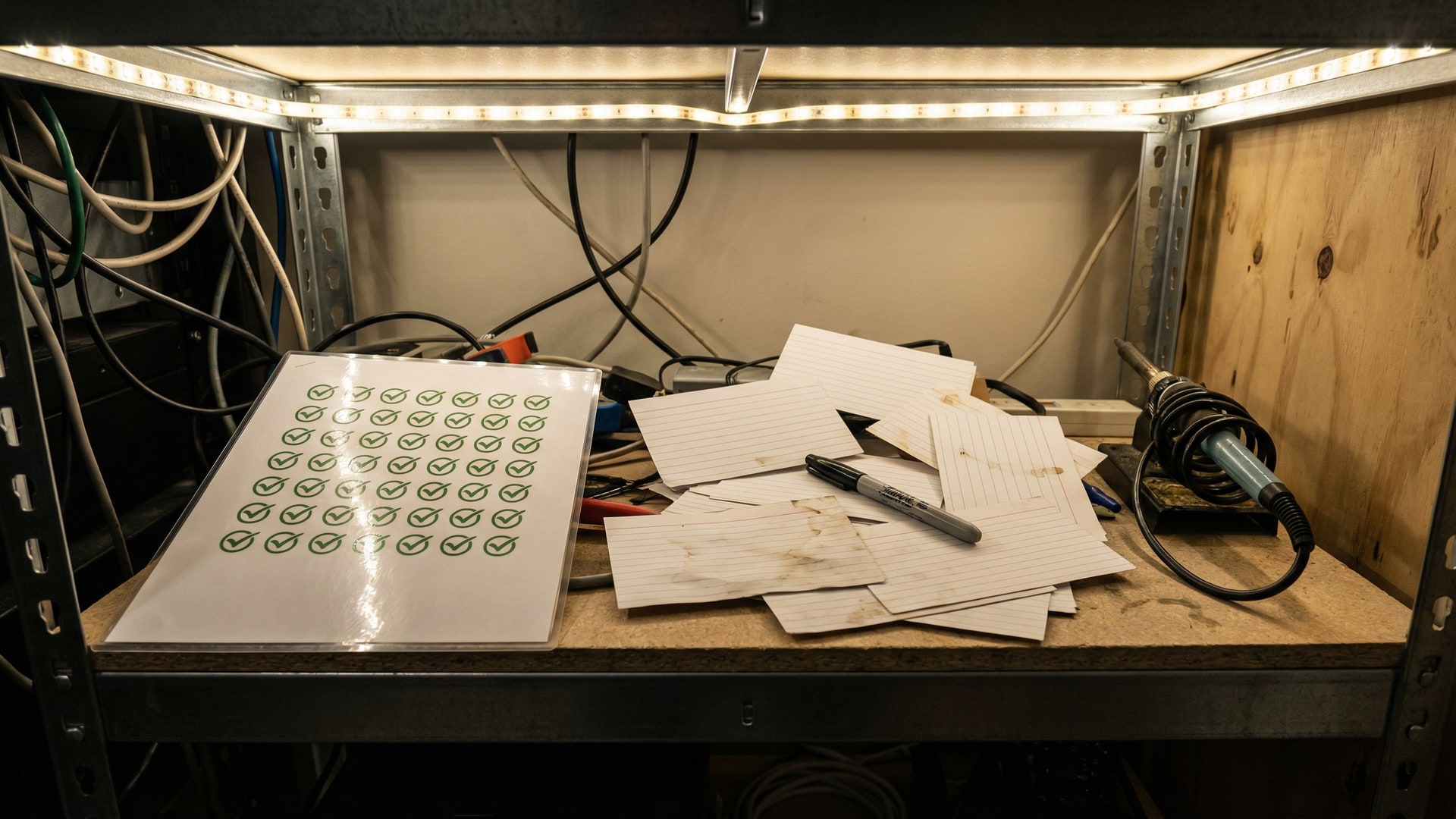

Your SonarQube dashboard shows 0 critical issues. Green across the board. Ship it.

Meanwhile, your codebase has three incompatible session management approaches. There's a homegrown ORM that silently swallows connection errors. The authentication flow technically works, but it violates every security principle in the OWASP Top 10 – not because of a specific vulnerability that a scanner would flag, but because the overall architecture doesn't make sense. The session tokens are stored in localStorage in one module and httpOnly cookies in another. The password reset flow bypasses the rate limiter because it was built before the rate limiter existed.

None of this shows up on your dashboard. Every rule is passing. The codebase is green.

Rules catch rule violations. They don't catch bad architecture.

Static analysis tools are excellent at what they do. They match code patterns against a database of known issues. Unused variable? Caught. Unchecked null dereference? Caught. SQL string concatenation instead of parameterized queries? Caught. These tools have prevented thousands of real bugs from reaching production, and they deserve every bit of adoption they've earned.
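To make "matching code patterns" concrete, here is a deliberately tiny sketch of a rule. It is an illustration only: real analyzers work on syntax trees and data flow rather than regular expressions, and the rule name and the `scan` function are made up for this example.

```typescript
// A toy, line-based rule for illustration. Not how any real tool is built.
interface Finding {
  line: number; // 1-based line number
  rule: string; // hypothetical rule identifier
  text: string; // the offending line, trimmed
}

// Flags a string literal that starts with a SQL keyword and is
// immediately concatenated with something via `+`.
const SQL_CONCAT = /["'`]\s*(SELECT|INSERT|UPDATE|DELETE)\b[^"'`]*["'`]\s*\+/i;

function scan(source: string): Finding[] {
  const findings: Finding[] = [];
  source.split("\n").forEach((text, index) => {
    if (SQL_CONCAT.test(text)) {
      findings.push({ line: index + 1, rule: "sql-string-concat", text: text.trim() });
    }
  });
  return findings;
}

const sample = [
  'const q1 = "SELECT * FROM users WHERE id = " + userId;',
  'const q2 = db.query("SELECT * FROM users WHERE id = $1", [userId]);',
].join("\n");

// Reports the concatenated query on line 1 and leaves the
// parameterized query on line 2 alone.
console.log(scan(sample));
```

Note what the rule sees: one line at a time. It has no way to know that a second module builds queries through a different data layer, which is the kind of question the rest of this post is about.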

But the hardest problems in legacy codebases aren't rule violations. They're architectural decisions that made sense five years ago and don't anymore. They're patterns that emerged organically as different teams solved the same problem in different ways. They're the kind of issues that a senior developer would notice during a thorough code review but that no rule database contains, because they're not violations of any specific rule – they're violations of coherence.

Consider what a rule-based tool can't express:

- Your project uses three different logging frameworks – Winston in the API layer, Bunyan in the background workers, and raw `console.log` in the utilities. None of these are wrong individually. Together, they mean your observability story is fragmented, your log formats are inconsistent, and debugging a request that crosses module boundaries requires checking three different outputs.

- Half your async code uses callbacks and the other half uses Promises. Someone started a migration two years ago and got halfway through. The result is that error handling behaves differently depending on which half of the codebase you're in, and the boundary between the two halves is where bugs live.

- There are 47 feature flags in the configuration. Twelve of them control features that shipped to all users over a year ago. Eight of them reference A/B tests that ended. Three of them are checked in code but never set anywhere. Nobody is confident enough to remove any of them because nobody knows what they all do.
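The feature flag item is a question about two places at once: what the configuration defines and what the code checks. A minimal sketch of that cross-reference might look like the following. The `isEnabled("...")` call shape and all flag names are assumptions for illustration; a real project would need to match its own flag API.

```typescript
interface FlagAudit {
  checkedButNeverSet: string[]; // referenced in code, absent from config
  setButNeverChecked: string[]; // present in config, never referenced
}

// Assumed call shape: isEnabled("flag-name") or isEnabled('flag-name').
const FLAG_CALL = /isEnabled\(\s*["']([\w.-]+)["']\s*\)/g;

// Collects every flag name referenced across a set of source texts.
function findReferencedFlags(sources: string[]): string[] {
  const names = new Set<string>();
  for (const source of sources) {
    FLAG_CALL.lastIndex = 0; // reset state of the global regex per source
    let match: RegExpExecArray | null;
    while ((match = FLAG_CALL.exec(source)) !== null) {
      names.add(match[1]);
    }
  }
  return Array.from(names);
}

// Compares the flags defined in config with the flags referenced in code.
function auditFlags(defined: string[], referenced: string[]): FlagAudit {
  const definedSet = new Set(defined);
  const referencedSet = new Set(referenced);
  return {
    checkedButNeverSet: Array.from(referencedSet).filter((f) => !definedSet.has(f)).sort(),
    setButNeverChecked: Array.from(definedSet).filter((f) => !referencedSet.has(f)).sort(),
  };
}

const definedFlags = ["new-checkout", "dark-mode", "ab-test-2022"];
const sources = [
  'if (isEnabled("new-checkout")) { renderNewCheckout(); }',
  "if (isEnabled('beta-search')) { enableBetaSearch(); }",
];

console.log(auditFlags(definedFlags, findReferencedFlags(sources)));
// { checkedButNeverSet: ["beta-search"],
//   setButNeverChecked: ["ab-test-2022", "dark-mode"] }
```

This only finds the mechanical part. Whether `ab-test-2022` is safe to delete still takes someone, or something, that understands what the flag was for.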

A static analyzer will not flag any of these. They're not rule violations. They're the kind of accumulated complexity that makes codebases hard to work in, slow to change, and expensive to maintain.
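The callback/Promise split from the list above shows why. The sketch below uses hypothetical function names and a made-up failure; each half is valid on its own, and the risk sits in the hand-written wrapper between them.

```typescript
// Node-style callback: the error, if any, is the first argument.
type Callback<T> = (error: Error | null, value?: T) => void;

// Callback half of the codebase. Failures are reported through the
// callback and nothing is thrown.
function loadUserCb(id: number, done: Callback<string>): void {
  if (id <= 0) {
    done(new Error(`invalid user id: ${id}`));
    return;
  }
  done(null, `user-${id}`);
}

// Promise half. This wrapper is the boundary: it translates one error
// convention into the other, and every hand-written copy of it is a
// chance to forget the `reject` branch.
function loadUser(id: number): Promise<string> {
  return new Promise<string>((resolve, reject) => {
    loadUserCb(id, (error, value) => {
      if (error) {
        reject(error);
      } else {
        resolve(value as string);
      }
    });
  });
}

// Callers on each side handle the same failure in different ways.
loadUserCb(-1, (error) => {
  if (error) console.log("callback side:", error.message);
});

loadUser(-1).catch((error: Error) => {
  console.log("promise side:", error.message);
});
```

Every line here would pass a linter. The problem only appears when you ask how many of these wrappers exist across the codebase and whether they all agree.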

What "review" actually means

When a skilled developer reviews a codebase – not a diff, but an actual codebase – they don't check rules. They build a mental model. They read file after file, accumulating context. They start to notice patterns: how errors are handled here versus there, which conventions are followed consistently and which ones aren't, where the boundaries between modules are clean and where they're tangled.

They notice things that don't belong. A database query in a view template. Business logic in a utility function. A retry mechanism that's been reimplemented four times in four different files. Configuration values that are hardcoded in some places and pulled from environment variables in others.
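Take the retry example. Once a reviewer spots four copies, the usual recommendation is one shared helper. The following is a minimal sketch of what that helper could look like; the names, option fields, and exponential backoff policy are assumptions, not a prescription.

```typescript
// Hypothetical shared helper that would replace the four local copies.
interface RetryOptions {
  attempts: number;    // total tries, including the first
  baseDelayMs: number; // wait before the second try
  factor: number;      // multiplier applied after each failure
}

// Delays between tries: one entry fewer than the number of attempts.
function backoffDelays(options: RetryOptions): number[] {
  const delays: number[] = [];
  for (let i = 0; i < options.attempts - 1; i++) {
    delays.push(options.baseDelayMs * Math.pow(options.factor, i));
  }
  return delays;
}

const sleep = (ms: number) =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Runs `operation` until it succeeds or the attempts are used up,
// then rethrows the last error.
async function withRetry<T>(
  operation: () => Promise<T>,
  options: RetryOptions,
): Promise<T> {
  if (options.attempts < 1) {
    throw new RangeError("attempts must be at least 1");
  }
  const delays = backoffDelays(options);
  let lastError: unknown;
  for (let attempt = 0; attempt < options.attempts; attempt++) {
    try {
      return await operation();
    } catch (error) {
      lastError = error;
      if (attempt < delays.length) {
        await sleep(delays[attempt]);
      }
    }
  }
  throw lastError;
}

console.log(backoffDelays({ attempts: 4, baseDelayMs: 100, factor: 2 }));
// [100, 200, 400]
```

The helper itself is unremarkable. The finding is that four files each carry their own version with slightly different limits and delays, which no single-file rule can see.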

This kind of review produces something that no dashboard can: a narrative understanding of what's wrong with the codebase and why. Not a list of line-level issues, but an assessment of the system as a whole.

The problem is that this review takes days or weeks of expensive human time. So it almost never happens. The codebase grows, the inconsistencies accumulate, and the dashboard stays green.

This is the kind of reasoning that large language models can now do at scale. An LLM can read files sequentially, accumulate context across hundreds of files, and reason about the relationships between them. It doesn't check whether a line violates a rule. It asks whether the code, taken as a whole, makes sense.

VibeRails fills the gap

VibeRails is a desktop application that orchestrates Claude Code and Codex CLI to perform full-codebase code review. It reads every file in your project, reasons about the whole, and surfaces the issues that live between the lines – the ones that rule-based tools were never designed to find.

The analysis covers 17 detection categories that go beyond what pattern matching can express: security vulnerabilities, performance bottlenecks, bug risks, dead code, complexity hotspots, type safety gaps, error handling weaknesses, API design issues, accessibility problems, observability gaps, concurrency risks, data integrity concerns, internationalisation issues, dependency problems, documentation gaps, testing deficiencies, and maintainability smells.

Triage mode lets you process findings efficiently – accept, reject, or defer each one with keyboard shortcuts. Fix sessions dispatch AI to implement the changes directly in your local repository. You review the diff, test, and commit.

VibeRails uses a bring-your-own-key (BYOK) model: it orchestrates your existing AI subscriptions rather than proxying requests through a VibeRails cloud service. Your API keys stay local, and your code isn't uploaded to VibeRails servers. You're not paying a middleman to resell you access to AI you already have.

Static analysis is necessary. It's just not sufficient. The green dashboard tells you that your code follows the rules. It doesn't tell you whether the rules are the right ones, or whether the code that follows them makes sense as a system.

Try VibeRails free – up to 5 issues per review session, no signup required.

Limits and tradeoffs

- An AI review can miss context. Treat findings as prompts for investigation, not verdicts.

- False positives happen. Plan a quick triage pass before you schedule work.

- Privacy depends on your model setup. If you use a cloud model, relevant code is sent to that provider; local models can keep inference on your own hardware.