Ask any engineering team whether they do code review and the answer is almost always yes. They review pull requests. They have review checklists. They require approvals before merging. Code review is a solved problem.

Except it is not. There is a fundamental gap between reviewing individual changes and reviewing the codebase as a whole. PR review ensures that each new change is reasonable in isolation. It does not ensure that the codebase, as a system, is coherent. And over the life of a project – hundreds of developers, thousands of pull requests, years of incremental changes – the system-level problems accumulate.

Nobody reviews the whole codebase. Historically, there have been good reasons for this. But those reasons are changing.

Why nobody reviews the whole codebase

Three practical barriers have historically prevented full-codebase review.

It is too expensive. A manual review of a 500,000-line codebase by a senior engineer takes weeks. At senior developer rates, that is tens of thousands of pounds in direct cost, plus the opportunity cost of having a senior person unavailable for feature work. For most organisations, this cost is difficult to justify against uncertain returns.

It is too slow. Even if you could justify the cost, a multi-week review produces findings that are immediately stale. By the time the reviewer finishes reading module 47, the team has already shipped changes to modules 1 through 20. The review is a snapshot of a system that no longer exists.

Who would do it? A full-codebase review requires someone who understands the entire system, not just their own corner of it. In most organisations, that person does not exist. The codebase has been built by dozens of developers over years. No single person holds the complete context. Even if such a person existed, they would have other responsibilities.

These are not trivial objections. They are the reason that full-codebase review has been a theoretical ideal rather than a practical activity for the entire history of software development.

What PR review misses

PR review is excellent at what it does. It catches bugs in new code, validates logic, enforces standards, and shares knowledge across the team. These are genuine, important benefits.

But PR review has a structural limitation: its scope is the diff. A reviewer sees what changed. They do not see, and are not expected to see, how that change relates to the other 499,990 lines of code. This means several categories of issues are systematically invisible to PR review.

Systemic patterns. A codebase might have three different approaches to configuration management, four different error handling strategies, and two different authentication mechanisms. Each approach was introduced in a PR that looked reasonable at the time. No single PR reveals the inconsistency. Only a view of the whole system shows that these patterns conflict.

Cross-module issues. Service A validates input at the API layer. Service B trusts input from Service A and skips validation. This is safe if Service A always validates correctly. It is a vulnerability if Service A's validation has a gap. Reviewing Service B in isolation, the missing validation looks like a reasonable trust assumption. Reviewing both services together reveals the fragile dependency.
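A minimal sketch of that fragile dependency, using two hypothetical functions to stand in for the services. The validation rules, the `quantity` field, and the function names are all assumptions made for illustration, not taken from any real system.

```python
def service_a_validate(payload: dict) -> dict:
    """Service A: validates input at the API layer (hypothetical rules)."""
    if not isinstance(payload.get("quantity"), int):
        raise ValueError("quantity must be an integer")
    if payload["quantity"] == 0:
        raise ValueError("quantity must not be zero")
    # Gap: negative quantities are never rejected.
    return payload


def service_b_reserve_stock(stock: int, payload: dict) -> int:
    """Service B: trusts Service A and does no validation of its own."""
    return stock - payload["quantity"]


# A negative quantity slips through Service A, and Service B increases stock.
remaining = service_b_reserve_stock(10, service_a_validate({"quantity": -5}))
print(remaining)  # 15
```

Read on its own, `service_b_reserve_stock` looks fine. The problem only appears when both functions are read together.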

Dead code. A function was used by three callers. Over time, each caller was refactored to use a different approach. The original function is now called by nobody, but it still exists. No PR removed it because no PR was about removing it – each caller was refactored independently. Dead code accumulates through subtraction of references, not addition of code, which makes it invisible to change-level review.
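To make this concrete, here is a rough sketch of a whole-codebase check for unreferenced functions, built on Python's standard `ast` module. It assumes the codebase is available as a mapping of file names to source text, and that entry points such as request handlers are listed by hand. The file names and functions are hypothetical. A real tool would also need to handle dynamic dispatch, reflection, and name collisions, which this sketch ignores.

```python
import ast


def find_unreferenced_functions(
    sources: dict[str, str], entry_points: frozenset[str] = frozenset()
) -> set[str]:
    """Return functions defined in `sources` that no file ever references."""
    defined: set[str] = set()
    referenced: set[str] = set()
    for source in sources.values():
        for node in ast.walk(ast.parse(source)):
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
                defined.add(node.name)
            elif isinstance(node, ast.Name):
                referenced.add(node.id)  # e.g. total(...)
            elif isinstance(node, ast.Attribute):
                referenced.add(node.attr)  # e.g. checkout.total(...)
    return defined - referenced - set(entry_points)


# Hypothetical three-file codebase: every caller of format_price has gone.
sources = {
    "legacy.py": "def format_price(pence):\n    return pence / 100\n",
    "checkout.py": "def total(items):\n    return sum(items)\n",
    "api.py": "from checkout import total\n\ndef handler(items):\n    return total(items)\n",
}

dead = find_unreferenced_functions(sources, entry_points=frozenset({"handler"}))
print(dead)  # {'format_price'}
```

The check only works because it sees every file at once. No single diff contains the information that `format_price` has lost its last caller.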

Architectural drift. The architecture diagram says the system uses a clean layered architecture: controllers call services, services call repositories, repositories access the database. In practice, some controllers call repositories directly. Some services access the database without going through a repository. Some utility modules import from every layer. The drift happened incrementally, one shortcut at a time, each one approved in PR review because it was expedient for that particular change.
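The layering rule described above can be written down as data and checked against the import graph. The sketch below assumes that each module's layer is the first segment of its dotted name and that the list of imports has already been extracted. The module names are hypothetical.

```python
# Which layers each layer is allowed to depend on, per the architecture diagram.
ALLOWED = {
    "controllers": {"services"},
    "services": {"repositories"},
    "repositories": {"database"},
    "database": set(),
}


def layer_of(module: str) -> str:
    """Assumption: the layer is the first segment of the dotted module name."""
    return module.split(".")[0]


def find_layer_violations(imports: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return (importer, imported) pairs that break the layering rule."""
    violations = []
    for importer, imported in imports:
        src, dst = layer_of(importer), layer_of(imported)
        if src == dst:
            continue  # imports within a layer are fine
        # Layers missing from ALLOWED (e.g. a utils package) may import nothing.
        if dst not in ALLOWED.get(src, set()):
            violations.append((importer, imported))
    return violations


imports = [
    ("controllers.orders", "services.orders"),       # allowed
    ("controllers.reports", "repositories.orders"),  # skips the service layer
    ("services.billing", "database.connection"),     # skips the repository layer
    ("services.orders", "repositories.orders"),      # allowed
]

violations = find_layer_violations(imports)
```

Here `violations` contains the two shortcut imports. Each would look like a single harmless line in its own PR.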

Duplicated logic. Two teams independently implement the same business logic in different modules. Both implementations are correct. Both pass review. Neither team knows the other exists. When the business rules change, one implementation gets updated and the other does not. Now the system has inconsistent behaviour that is extremely difficult to diagnose.
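As an illustration, suppose two teams each wrote a loyalty discount, and the rule later changed from 10 to 15 per cent. The functions, file paths, and figures below are invented for the example. The sketch shows the state after only one copy was updated.

```python
# billing/discounts.py (hypothetical): updated when the rule changed to 15%.
def loyalty_discount_billing(total_pence: int, years: int) -> int:
    return total_pence * 15 // 100 if years >= 2 else 0


# quotes/pricing.py (hypothetical): the forgotten copy, still at 10%.
def loyalty_discount_quotes(total_pence: int, years: int) -> int:
    return total_pence * 10 // 100 if years >= 2 else 0


def find_disagreements(cases: list[tuple[int, int]]) -> list[tuple[int, int]]:
    """Return the inputs on which the two implementations differ."""
    return [
        case
        for case in cases
        if loyalty_discount_billing(*case) != loyalty_discount_quotes(*case)
    ]


cases = [(10_000, 1), (10_000, 2), (2_500, 5)]
disagreements = find_disagreements(cases)
print(disagreements)  # [(10000, 2), (2500, 5)]
```

A customer is now quoted one price and billed another. Each function is correct against the rule its team last saw, so neither looks wrong in isolation.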

The economics changed

The three barriers to full-codebase review – cost, speed, and the lack of anyone with whole-system context – were real constraints for human reviewers. They constrain AI far less.

Cost. An AI-powered full-codebase scan costs a fraction of a human review. The cost scales with the size of the codebase, but even large codebases can be scanned for a tiny fraction of what a multi-week manual review would cost. The economics that made full-codebase review impractical for decades have fundamentally shifted.

Speed. A scan that would take a human reviewer weeks can be completed in hours. The findings are current, not stale. The team can act on them while the codebase is still in the state that was reviewed. Speed also enables repetition – instead of a one-off review, teams can scan regularly and track progress over time.

Scope. This answers the question of who would do it. An AI reviewer reads every file. It does not get fatigued, does not skip modules it finds boring, and does not lose context as it moves between files. It can hold patterns in memory across the entire codebase and identify inconsistencies that span hundreds of files. This is not a replacement for human expertise – AI reviewers have their own limitations – but it is a capability that no individual human reviewer can match at scale.

The question was never whether full-codebase review is valuable. It always was. The question was whether it was feasible. Now it is.

What teams discover when they scan everything

Teams that run their first full-codebase scan consistently report a specific set of surprises. Not because the findings are exotic, but because they are systemic issues that were hiding in plain sight.

More inconsistency than expected. Teams that believe they have consistent patterns discover that consistency eroded over time. The style guide says one thing. The code says three different things. This is not a failing of any individual developer – it is the natural entropy of a system built by many people over time.

Dead code they thought was live. Modules that nobody uses but everybody assumed someone else depended on. Configuration options that are parsed but never read. Feature flags that were never removed after the feature was fully launched. Dead code is like dark matter – it is invisible until you specifically look for it, and there is more of it than you expect.

Security assumptions that do not hold. A common pattern: authentication is enforced at the API gateway, so internal services do not validate authentication. This works until someone adds a new internal endpoint that is accidentally exposed externally. Or until the gateway configuration is changed and the assumption breaks. Full-codebase review reveals these trust chains and their fragility.

Test coverage gaps in critical paths. The overall test coverage number might look healthy – 80 per cent, say. But a full-codebase review reveals that coverage is concentrated in the easy-to-test modules while the most critical paths – payment processing, data migration, error recovery – have minimal coverage. The aggregate number obscures the distribution.
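A small worked example shows how a healthy aggregate can hide the distribution. The module names and line counts are invented, chosen so that the aggregate comes out at exactly 80 per cent. The 50 per cent threshold is likewise an arbitrary assumption.

```python
# module: (covered_lines, total_lines), all hypothetical figures
modules = {
    "utils/formatting": (1900, 2000),
    "api/serializers": (2850, 3000),
    "ui/widgets": (2700, 3000),
    "payments/processing": (300, 1000),
    "migrations/runner": (250, 1000),
}


def coverage(covered: int, total: int) -> float:
    """Line coverage as a percentage."""
    return 100 * covered / total


aggregate = coverage(
    sum(covered for covered, _ in modules.values()),
    sum(total for _, total in modules.values()),
)

# Modules below the (assumed) 50 per cent threshold.
under_tested = {
    name: coverage(covered, total)
    for name, (covered, total) in modules.items()
    if coverage(covered, total) < 50
}

print(aggregate)     # 80.0
print(under_tested)  # {'payments/processing': 30.0, 'migrations/runner': 25.0}
```

The headline number is 80 per cent, yet the two modules where a failure would hurt most sit at 30 and 25 per cent.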

Full-codebase review is not a replacement

Full-codebase review does not replace PR review, static analysis, or manual architecture review. It complements them by covering the territory they cannot reach.

PR review catches change-level defects. Static analysis catches rule violations. Manual architecture review validates design decisions. Full-codebase review catches the systemic issues that emerge from the interaction of hundreds of individual changes over time.

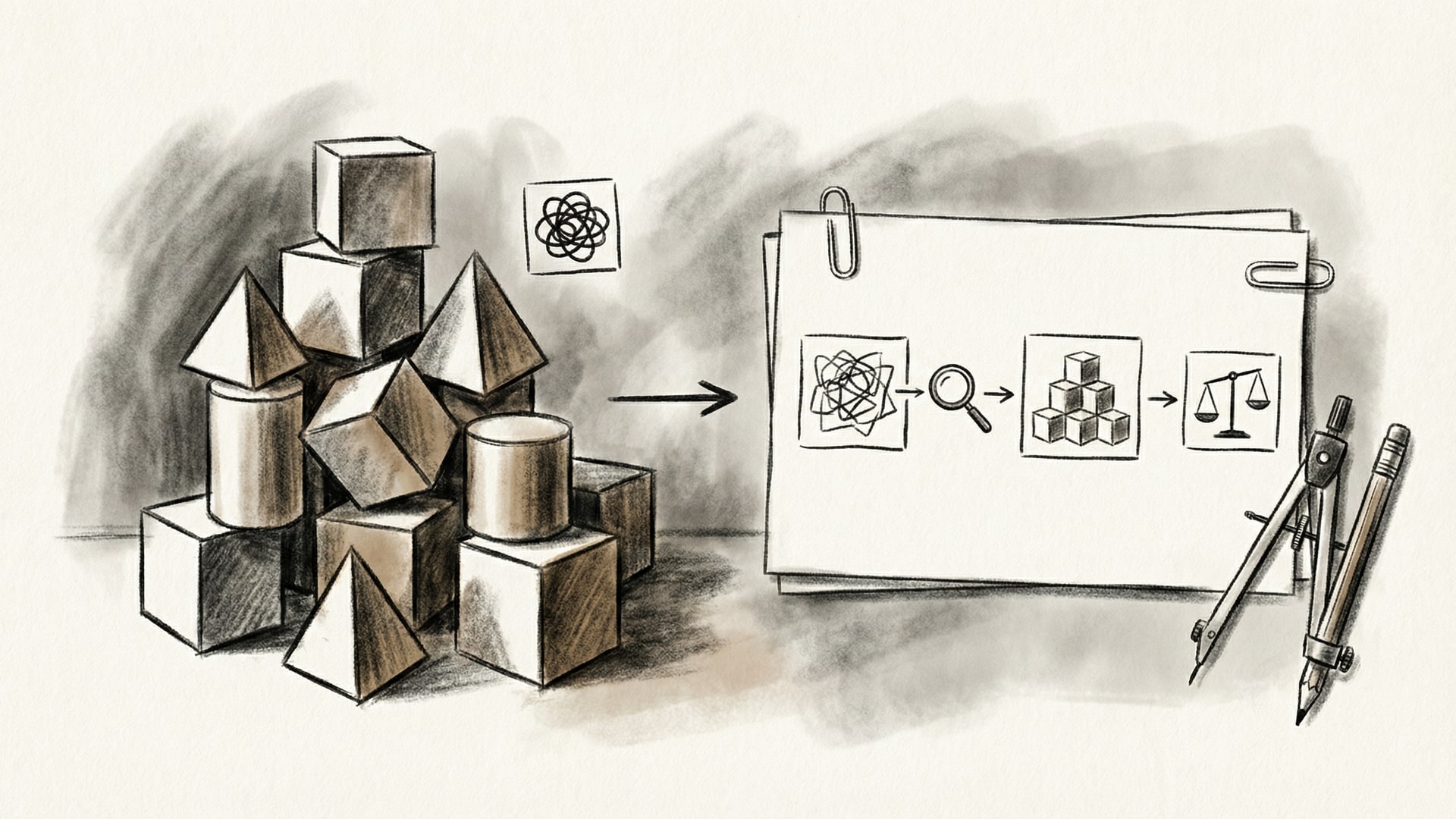

Think of it as the difference between inspecting individual bricks and assessing the structural integrity of the building. Both are necessary. The industry has been doing the first for decades. It is now practical to do the second.

Making it practical

VibeRails exists because full-codebase review should be a routine part of engineering practice, not a theoretical ideal. It scans your entire codebase, not individual changes. It identifies systemic patterns, cross-module issues, architectural drift, and dead code that PR review structurally cannot catch.

It uses your own AI subscriptions through a bring-your-own-key (BYOK) model. Each developer buys their own licence, and there is no AI markup in the price. Your source code is sent only to the AI provider you already use, never to VibeRails servers. It runs locally on your desktop. The scan produces a structured set of findings with severity ratings that you triage into actions, deferrals, and dismissals.

The case for full-codebase review was always strong. What was missing was a tool that made it practical. That tool now exists. The question for your team is not whether full-codebase review is valuable – it is when you will try it.