Every code quality tool reports complexity metrics. SonarQube has them. ESLint has rules for them. Your IDE probably highlights functions that exceed a threshold. The numbers appear on dashboards, in CI pipelines, and in code review comments. But surprisingly few developers can explain what these numbers actually represent, how they differ from one another, or why a function with a low complexity score can still be terrible code.

Understanding complexity metrics properly requires knowing what each one measures, what each one ignores, and where the entire category of syntactic measurement falls short. That last point is the most important, because it explains why metrics alone will never replace genuine code review.

Cyclomatic complexity: counting decision points

Cyclomatic complexity is the oldest and most widely used code complexity metric. Introduced by Thomas McCabe in 1976, it measures the number of linearly independent paths through a function. In practical terms, it counts decision points: every if, case, while, for, catch, &&, and || adds one to the score. A plain else adds nothing, because it is the other arm of a decision that has already been counted. A function with no branching has a cyclomatic complexity of 1. A function with a single if/else has a complexity of 2. A function with a switch statement containing ten cases has a complexity of at least 10.
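The counting rule can be sketched in a few lines of Python using the standard ast module. This is a simplified illustration, not how any particular tool computes the score: the set of node types is an assumption chosen for brevity, comprehensions and match statements are ignored, and a bare else is deliberately not counted.

```python
import ast

# Node types treated as decision points in this sketch (an assumed, simplified rule set).
_BRANCH_NODES = (ast.If, ast.IfExp, ast.For, ast.While, ast.ExceptHandler)


def cyclomatic_complexity(source: str) -> int:
    """Approximate cyclomatic complexity of a snippet: 1 + decision points."""
    score = 1  # straight-line code has exactly one path
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, _BRANCH_NODES):
            score += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` holds three values joined by two operators
            score += len(node.values) - 1
    return score


STRAIGHT = "def f(x):\n    return x + 1\n"
BRANCHY = (
    "def g(x, y):\n"
    "    if x > 0 and y > 0:\n"
    "        return 1\n"
    "    elif x < 0:\n"
    "        return -1\n"
    "    else:\n"
    "        return 0\n"
)

print(cyclomatic_complexity(STRAIGHT))  # 1
print(cyclomatic_complexity(BRANCHY))   # 4: base 1 + if + `and` + elif
```

Real analysers differ in the details (for example, whether each case label or only the switch counts), so scores from two tools are not always directly comparable.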

The metric was designed to indicate how many test cases a function needs. The score equals the number of linearly independent paths through the function, which is the number of tests McCabe's basis path testing calls for and an upper bound on the number needed to cover every branch. A function with a cyclomatic complexity of 15 therefore has at least 15 distinct execution paths, and covering all of its branches can take up to 15 tests. This makes cyclomatic complexity useful as a rough indicator of testing difficulty. Functions with high scores are harder to test thoroughly, which usually means they are also harder to understand and maintain.

Most teams set a threshold somewhere between 10 and 20. Functions exceeding the threshold are flagged for refactoring. This is a reasonable heuristic, but it has significant blind spots. A flat switch statement with 12 cases might have a cyclomatic complexity of 12, but if each case is a simple return statement, the function is trivial to understand. Meanwhile, a function with a complexity of 6 that nests three levels of conditionals with side effects in each branch can be genuinely difficult to reason about. Cyclomatic complexity treats all decision points equally, regardless of nesting depth, cognitive load, or semantic complexity.

Cognitive complexity: measuring human readability

Cognitive complexity was developed by SonarSource specifically to address the shortcomings of cyclomatic complexity. Where cyclomatic complexity asks “how many paths exist?”, cognitive complexity asks “how hard is this code for a human to read?”

The key difference is nesting. Cognitive complexity penalises nested control structures more heavily than flat ones. A sequence of five if statements at the same level adds 5 to the score. Five nested if statements add 5 + (1 + 2 + 3 + 4) = 15, because each additional level of nesting adds an incrementing penalty. This reflects the actual experience of reading code: deeply nested logic is disproportionately harder to follow than flat logic, even when the number of decision points is the same.
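The arithmetic above can be reproduced with a small sketch. It scores only if statements (one point each, plus one point per enclosing if) and deliberately ignores elif, loops, and every other rule in the full SonarSource specification; the helper names are made up for this example.

```python
import ast


def nesting_score(source: str) -> int:
    """Score `if` statements only: +1 each, plus +1 per enclosing `if`."""

    def visit(node: ast.AST, depth: int) -> int:
        total = 0
        for child in ast.iter_child_nodes(node):
            if isinstance(child, ast.If):
                total += 1 + depth              # base increment plus nesting penalty
                total += visit(child, depth + 1)
            else:
                total += visit(child, depth)
        return total

    return visit(ast.parse(source), 0)


def flat_ifs(n: int) -> str:
    """Source text for n sibling `if` statements."""
    return "".join(f"if c{i}:\n    pass\n" for i in range(n))


def nested_ifs(n: int) -> str:
    """Source text for n `if` statements, each inside the previous one."""
    lines = ["    " * i + f"if c{i}:" for i in range(n)]
    lines.append("    " * n + "pass")
    return "\n".join(lines) + "\n"


print(nesting_score(flat_ifs(5)))    # 5
print(nesting_score(nested_ifs(5)))  # 15
```

Both snippets contain the same five decision points, so their cyclomatic complexity is identical; only the nesting-aware score separates them.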

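Cognitive complexity also handles some constructs differently from cyclomatic complexity. An else or else if branch adds one to the score but never picks up a nesting penalty, since it continues a decision the reader is already following. Boolean operators are counted per sequence rather than per operator: a && b && c adds one, while a && b || c adds two, because the reader has to switch from one operator to another. An entire switch counts once, however many cases it contains. And breaks in linear flow, such as recursion or jumps to a label, each add an increment of their own.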

For most teams, cognitive complexity is the more useful of the two metrics. It correlates more closely with the actual difficulty of reading and modifying code. A function with a high cognitive complexity score genuinely tends to be hard to work with, whereas a function with a high cyclomatic complexity score might or might not be.

Halstead metrics: measuring vocabulary and volume

Halstead metrics take a fundamentally different approach. Instead of counting control flow structures, they analyse the vocabulary of the code: the number of distinct operators and operands, and the total number of operators and operands used. From these four base measurements, several derived metrics are calculated.

Program vocabulary is the count of distinct operators plus distinct operands. Program length is the total count of all operators and operands. Volume measures the information content of the code: program length multiplied by the base-2 logarithm of the vocabulary, roughly the number of bits needed to encode the program. Difficulty estimates how hard the code is to write or understand; it grows with the number of distinct operators and with how often each operand is reused (total operands divided by distinct operands). Effort multiplies volume by difficulty to estimate the mental effort required to develop or comprehend the code.
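As a rough illustration, the derived metrics can be computed from the four base counts using the standard Halstead formulas. The class and field names below are invented for this sketch, and the counts for the sample statement follow one possible counting convention; tools disagree on what counts as an operator.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Halstead:
    distinct_operators: int  # n1
    distinct_operands: int   # n2
    total_operators: int     # N1
    total_operands: int      # N2

    @property
    def vocabulary(self) -> int:
        return self.distinct_operators + self.distinct_operands

    @property
    def length(self) -> int:
        return self.total_operators + self.total_operands

    @property
    def volume(self) -> float:
        # length * log2(vocabulary)
        return self.length * math.log2(self.vocabulary)

    @property
    def difficulty(self) -> float:
        # (n1 / 2) * (N2 / n2): operator variety times average operand reuse
        return (self.distinct_operators / 2) * (
            self.total_operands / self.distinct_operands
        )

    @property
    def effort(self) -> float:
        return self.difficulty * self.volume


# Counts for `total = a + b * a` under one assumed convention:
# operators =, +, * (3 distinct, 3 total); operands total, a, b (3 distinct, 4 total).
h = Halstead(distinct_operators=3, distinct_operands=3,
             total_operators=3, total_operands=4)
print(h.vocabulary, h.length, h.difficulty)  # 6 7 2.0
print(round(h.volume, 2), round(h.effort, 2))
```

Difficulty and effort are undefined when a snippet has no operands, so a production implementation would need to guard against division by zero.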

Halstead metrics are less commonly used than cyclomatic or cognitive complexity, partly because they are harder to interpret and partly because they are more sensitive to coding style. A function written with verbose variable names and explicit intermediate variables will have different Halstead scores than the same logic written with terse names and chained expressions, even though the underlying complexity is identical.

That said, Halstead effort can be a useful secondary indicator. Functions with high effort scores tend to be doing too much. And the vocabulary metric can highlight functions that juggle an unusually large number of distinct concepts, which is often a sign that the function has too many responsibilities.

Other metrics worth knowing

Beyond the big three, several other metrics appear in quality dashboards.

Lines of code (LOC) is the simplest and often the most underrated. Long functions are harder to understand than short ones, regardless of their branching structure. A 400-line function with a cyclomatic complexity of 3 is still a problem – it means the function has few decision points but an enormous amount of sequential logic, which is hard to navigate and test.

Depth of nesting measures the maximum level of indentation in a function. Even without a formal cognitive complexity calculation, deeply nested code is a red flag. If you have to track four levels of context to understand a single line, the code needs restructuring.
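A minimal sketch of a nesting-depth check, again using Python's ast module. The choice of which statements count as a nesting level is an assumption made here, and because Python represents elif as an if nested inside the else branch, this simple version reports an elif chain as deeper than it looks on screen.

```python
import ast

# Statements that open a new level of nesting in this sketch (an assumed set).
_NESTING_NODES = (ast.If, ast.For, ast.While, ast.With, ast.Try)


def max_nesting_depth(source: str) -> int:
    """Return the deepest level of nested control structures in the source."""

    def depth_of(node: ast.AST, current: int) -> int:
        deepest = current
        for child in ast.iter_child_nodes(node):
            step = 1 if isinstance(child, _NESTING_NODES) else 0
            deepest = max(deepest, depth_of(child, current + step))
        return deepest

    return depth_of(ast.parse(source), 0)


SAMPLE = (
    "def handle(items):\n"
    "    for item in items:\n"
    "        if item:\n"
    "            while item > 0:\n"
    "                item -= 1\n"
)

print(max_nesting_depth(SAMPLE))  # 3
```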

Fan-in and fan-out measure how many other modules call a given module (fan-in) and how many modules it calls (fan-out). High fan-out indicates a module that depends on many other parts of the system, making it fragile to changes elsewhere. High fan-in indicates a module that many other parts depend on, making it risky to modify.
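Given a dependency map, both numbers fall out of a simple count. The sketch below assumes the map has already been extracted (for example from import statements); the module names are made up for illustration.

```python
from collections import Counter


def fan_out(deps: dict[str, list[str]]) -> dict[str, int]:
    """Number of distinct modules each module depends on."""
    return {module: len(set(targets)) for module, targets in deps.items()}


def fan_in(deps: dict[str, list[str]]) -> dict[str, int]:
    """Number of distinct modules that depend on each module."""
    counts: Counter = Counter()
    for targets in deps.values():
        for target in set(targets):
            counts[target] += 1
    every_module = set(deps) | set(counts)
    return {module: counts.get(module, 0) for module in every_module}


# Hypothetical dependency map: module -> modules it calls.
DEPS = {
    "api": ["auth", "db", "cache"],
    "auth": ["db"],
    "jobs": ["db", "cache"],
    "db": [],
    "cache": [],
}

print(fan_out(DEPS)["api"])  # 3: api leans on three other modules
print(fan_in(DEPS)["db"])    # 3: three modules would feel a change to db
```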

Maintainability index is a composite metric that combines cyclomatic complexity, Halstead volume, and lines of code into a single score. It was popularised by Visual Studio and is widely available, though its usefulness is debated. Composite metrics can obscure the specific nature of the problem: a low maintainability index tells you something is wrong but not what.
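One commonly cited formulation, shown here as a sketch, takes the original three-input formula and rescales it to a 0 to 100 range, with higher scores meaning easier maintenance. Tools vary in coefficients and in whether they add a comment-ratio term, so treat the constants as one variant rather than a universal definition.

```python
import math


def maintainability_index(halstead_volume: float, cyclomatic: int, loc: int) -> float:
    """Three-input maintainability index, rescaled to 0-100 and clamped at zero."""
    if halstead_volume <= 0 or loc <= 0:
        raise ValueError("volume and line count must be positive")
    raw = (
        171
        - 5.2 * math.log(halstead_volume)
        - 0.23 * cyclomatic
        - 16.2 * math.log(loc)
    )
    return max(0.0, raw * 100 / 171)


# Illustrative inputs, not measurements from a real codebase.
small = maintainability_index(halstead_volume=100, cyclomatic=2, loc=10)
large = maintainability_index(halstead_volume=5000, cyclomatic=25, loc=300)
print(f"small helper: {small:.1f}, large function: {large:.1f}")
```

Because three inputs collapse into one number, the same score can come from a long but simple function or a short but tangled one.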

Why metrics alone are not enough

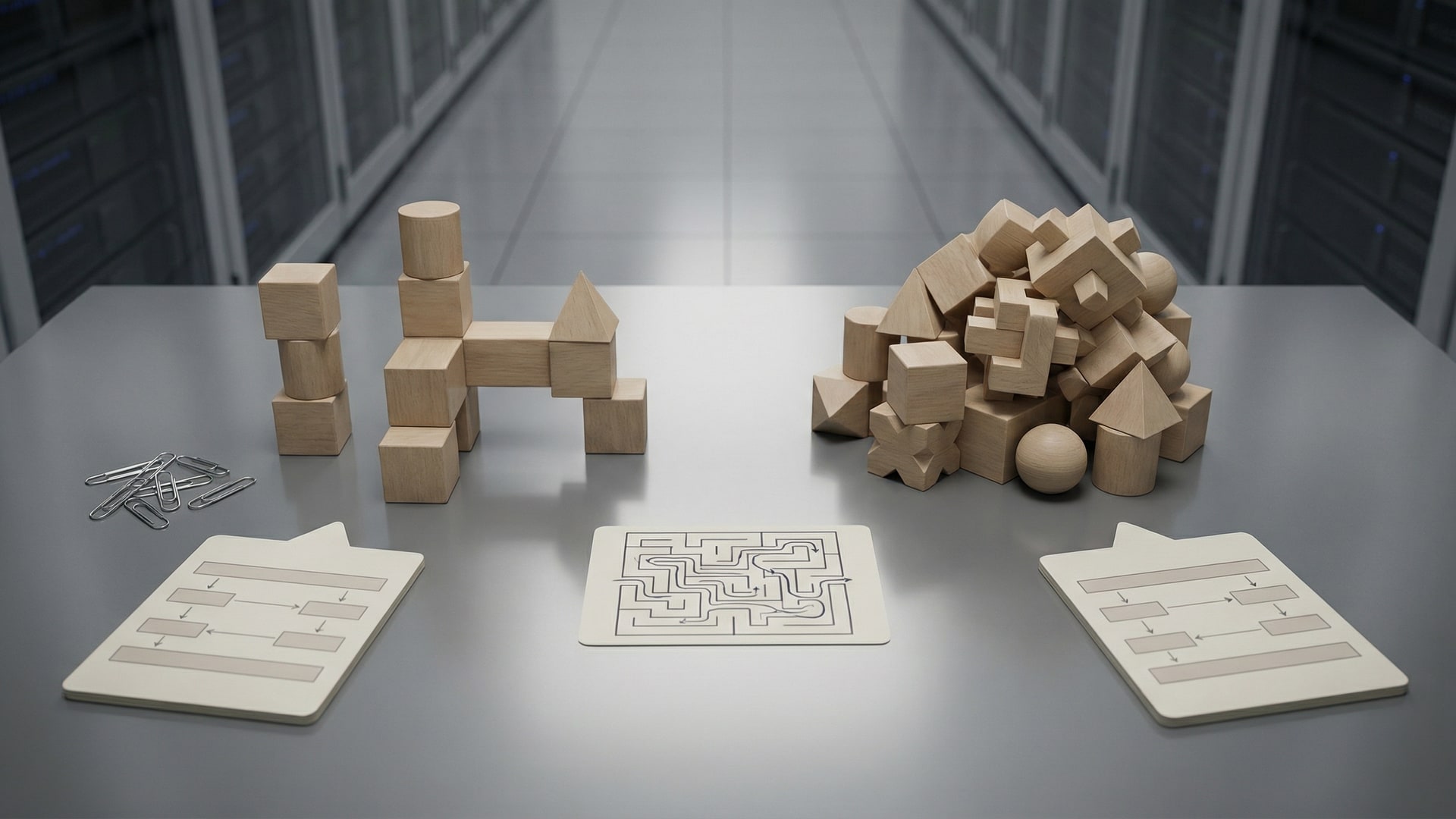

Every metric described above measures syntax. They count tokens, branches, nesting levels, and operators. They analyse the structure of the code as written. What they cannot do is evaluate the semantics – what the code actually means, whether it does the right thing, and whether its design makes sense in context.

Consider an example. A function has a cyclomatic complexity of 3, a cognitive complexity of 2, and is only 25 lines long. By every metric, it is simple and maintainable. But the function implements a caching strategy that conflicts with the caching strategy used everywhere else in the codebase. It stores session data in a way that violates the application's security model. And it silently swallows errors instead of propagating them to the caller, which means failures in this path are invisible in production.

No complexity metric will flag any of these problems. They are not structural issues. They are semantic issues – problems with what the code does, not how it is structured. A linter will not find them. A static analyser configured to check cyclomatic complexity will give this function a passing grade. Only a reviewer who understands the surrounding codebase, the security model, and the error handling conventions will spot that this simple function is actually dangerous.

This is the fundamental limitation of metrics: they measure the shape of the code, not its meaning. A function can be syntactically simple and semantically terrible. Metrics will not tell you the difference.

How AI review captures what metrics miss

AI code review tools operate at a different level from complexity metrics. Instead of counting tokens and branches, they read the code and reason about it. An LLM-based reviewer can identify that a function uses a different error handling pattern from the rest of the codebase. It can recognise that a caching implementation conflicts with the approach used elsewhere. It can flag a security concern based on how data flows through the function, not based on how many if statements it contains.

This is not to say that AI review replaces metrics. Metrics are fast, deterministic, and cheap. They run in milliseconds as part of a CI pipeline and provide a consistent baseline. A function that exceeds a complexity threshold genuinely deserves attention, and catching that automatically is valuable.

But metrics are tier one. They catch the obvious structural problems. The subtle semantic problems – the architectural inconsistencies, the security oversights, the design decisions that will cause pain six months from now – require a reviewer that understands what the code is trying to do. AI review provides that second tier of analysis. It reads the code the way a human reviewer would, evaluating intent and context rather than just counting syntax.

VibeRails combines both tiers. It reports on complexity where relevant, but its primary analysis is semantic: it evaluates code against categories like security, architecture, error handling, and consistency. The result is a set of findings that go beyond what any metric can measure, because they are grounded in understanding rather than counting.

Using metrics wisely

Complexity metrics are useful tools. They are not the final word on code quality.

Use cyclomatic complexity to identify functions that are difficult to test. Use cognitive complexity to identify functions that are difficult to read. Use Halstead metrics as a secondary indicator of functions that juggle too many concepts. Use lines of code as a simple sanity check. Use fan-in and fan-out to understand module dependencies.

But do not mistake a passing score for a clean bill of health. A codebase where every function has a cyclomatic complexity under 10 can still have critical security vulnerabilities, architectural inconsistencies, and design problems that no metric will ever detect. Metrics measure the surface. Understanding what lies beneath requires review – human, AI, or ideally both.

Limits and tradeoffs

- AI review can miss context. Treat findings as prompts for investigation, not verdicts.

- False positives happen. Plan a quick triage pass before you schedule work.

- Privacy depends on your model setup. If you use a cloud model, relevant code is sent to that provider; local models can keep inference on your own hardware.